There are many great books on the impact of technological advancement on the global economy and the world at large.

The Second Machine Age by Andrew McAfee and Erik Brynjolfsson, published in 2014, is probably among the most eloquent and widely read of these.

Often books like these conclude with the reassuring message that machines are not going to take your job.

Remember what happened in chess? Great machines could beat great grandmasters, but an amateur player combined with an average machine could beat either of them. People will always be needed.

And that is where most of these books stop.

But what does this collaboration between people and machines look like? And importantly for our readers, what impact might it have on the investment industry?

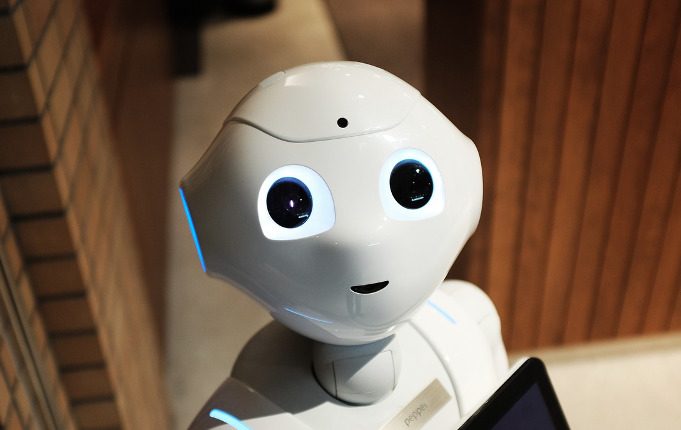

Interacting with Machines

Simon Russell is a former investment banker and asset consultant, who founded Behavioural Finance Australia in 2014 to focus on decision-making processes in the asset management industry.

In his new book, Cyborg: How to Optimally Integrate Human and Machine Investment Decision-making, Russell picks up where most books end and describes various models of what a collaboration between machines and investment professionals might look like.

In the title of his book, Russell plays with the ambivalence many people feel towards a future of collaborating with machines.

The term ‘cyborg’ evokes visions of a Frankenstein-like creation, such as the Terminator in the 1980s movie of the same name, or the half policeman, half robot of the movie RoboCop.

Yet robots or artificial intelligence (AI) in the context of asset management are somewhat less dramatic.

“Artificial intelligence is a continuum,” Russell says in an interview with [i3] Insights.

“There is a whole lot of noise out there and either people get really excited and think it can solve everything or people tend to think that AI or quantitative rules have little value and override them with human judgment too much.”

In fact, multiple studies have found that one of the main problems when people deal with machines is that they often believe they can outperform them, when in fact they cannot.

“There are literally hundreds of studies that show human judgement fails to surpass simple algorithms in a whole lot of domains,” Russell says.

“These studies are often not even investigating sophisticated artificial intelligence; it is often a simple decision-making rule that combines one or two factors in a simple linear equation. Can you beat that? In many cases, you can’t.”

It is a notion that hasn’t escaped those who have been able to exploit quantitative tools successfully.

In a recent New Yorker article about Renaissance Technologies founder Jim Simons, the key to his success was summed up this way: “He never overrode the model. Once he settled on what should happen, he held tight until it did.”

Overriding algorithms on the basis that they lack insight or intuition can be a big problem, but so is the opposite: assuming they are more accurate than they really are and failing to override them when required, Russell says.

“We seem to be at loggerheads: we often either rely on it too much or we override it too much.

“How do we bring the two together; to collaborate rather than to compete? This seems an under-explored area, particularly in asset management.”

In his book, Russell describes two models of human/machine interaction: the Terminator model, where computers make most of the investment decisions and humans simply check, adjust or augment the models, and the Iron Man model, where human investment teams leverage technology to enhance or to systemise their decision-making.

Russell argues the most appropriate model will depend on the circumstances, with each approach having advantages over the other in some situations, for some types of decisions and for some decision-makers.

For example, he sees a more important role for the Iron Man model in growth stocks, private or less developed markets, and small caps: areas where qualitative research is still important, but where technology can provide a consistent framework for applying research findings.

The opportunity here is not necessarily to find the ultimate trading algorithm, but to create the optimal decision-making process for the circumstances by having a rich and nuanced understanding of the interaction between human, machine and team decision-making processes.

And that is where behavioural finance comes in.

Human biases play an important role in how people deal with complex problems and, by extension, how they deal with machines.

One problem with humans is that what is important to one person might not be to another, even though they both believe they are following the same process.

“In the workshops I run with asset managers and analysts, I sometimes ask people the question: If you can only pick three pieces of information to evaluate a stock, which would they be? I often get very different answers, even within an investment team,” Russell says.

“You might get someone who says: ‘Okay, I want some sort of value measure, let’s say price/earnings ratio, I want some profitability measure, so tell me its return on invested capital, and maybe some idea of momentum, so give me the last 12 months of share price movement.’

“You can think: ‘Okay, those are big-ticket things.’ Fama and French might say: ‘Yes, these are three factors that are somewhat akin to our five-factor model.’

“But then you will have somebody in the team saying: ‘Hold on, you don’t even know what this thing does. You don’t know what industry it is in or anything about the company at all.’

“Often with decision-making research you’ll see that when you do a regression analysis or run a randomised control trial, what people tell you is important is not what actually drives their decisions.

“This to me is a huge blind spot in qualitative decision-making and presents an opportunity to use machines to enhance human judgment.”

Analysis Paralysis

Part of the problem is that investing revolves around dealing with large data sets and complex problems. The temptation to keep digging for more information is very strong.

“How do you deal with noise? If you draw a chart where the vertical axis is your decision-making accuracy and your horizontal axis is the number of additional pieces of data or information, what you get is a line that increases dramatically when you give people what they have identified as the first, second and third most important pieces of information,” Russell says.

“But then you get a rapidly decreasing return on information and it starts tailing off because you have to deal with issues such as overfitting, distraction and false positives.

“So it is a matter of really deciding what to focus on. Where do I stop before I get analysis paralysis?

“It is very easy to be distracted by piece of information number 100, when you are not really sure what the main drivers are and you don’t have a process where things get ordered in the right way.

“You need some type of weighting system, where you say this gets a 50 per cent weighting, say, this 25 and this 25 and the rest zero. Make sure that process is clear.”
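As a rough illustration of the kind of weighting system Russell describes, the Python sketch below scores stocks with a fixed 50/25/25 split across three inputs and gives every other input a zero weight. The factor names (borrowed from the workshop example of a value measure, return on invested capital and 12-month momentum), the assumption that each input has already been standardised to a comparable scale, and the sample figures are all hypothetical. This is not Russell's own process.

```python
# Hypothetical weights: a fixed 50/25/25 split; any other input gets zero weight.
WEIGHTS = {
    "value": 0.50,          # e.g. a standardised value measure such as earnings yield
    "profitability": 0.25,  # e.g. a standardised return on invested capital
    "momentum": 0.25,       # e.g. a standardised 12-month share price return
}


def weighted_score(factors: dict[str, float]) -> float:
    """Combine standardised factor scores into a single number.

    Inputs not listed in WEIGHTS (piece of information number 100, say)
    are simply ignored, which is the same as giving them a zero weight.
    Missing weighted factors are treated as a neutral score of 0.0.
    """
    return sum(weight * factors.get(name, 0.0) for name, weight in WEIGHTS.items())


# Made-up, already standardised inputs for two hypothetical stocks.
stocks = {
    "Stock A": {"value": 1.2, "profitability": 0.4, "momentum": -0.5, "analyst_buzz": 3.0},
    "Stock B": {"value": -0.3, "profitability": 1.0, "momentum": 0.8},
}

# Rank stocks from highest to lowest combined score.
ranked = sorted(stocks, key=lambda s: weighted_score(stocks[s]), reverse=True)
for name in ranked:
    print(f"{name}: {weighted_score(stocks[name]):.3f}")
```

Here Stock A scores 0.575 and Stock B 0.300, so Stock A ranks first. Its extra "analyst_buzz" input has no effect on the result. Writing the weights down explicitly is one way to make the process clear: everyone on the team can see what drives the score and what is deliberately left out.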

The Importance of Regret

Dr Alistair Rew, Head of Alpha Strategies at AMP Capital, makes extensive use of quantitative tools and, to a lesser degree, machine learning. He provided comments and feedback on Russell's analysis, some of which are incorporated in the book.

Rew spends a lot of time thinking about human biases in dealing with large data sets and quantitative tools.

“We [Simon and I] discussed analysis paralysis in some detail because in our industry we have a lot of analytical people and so there is a human bias to rely on more data,” he tells [i3] Insights.

“Even though I’m a mathematician, I don’t believe in overanalysing decisions, because there is also another human bias and that is regret.

“You need to understand what makes you regret a decision.

“If you have 75 per cent of the information, then a very analytical person might look for the other 25 per cent. But I would recommend people to get to 75 per cent and then identify the three to five questions that would make you change your mind.

“That way you don’t have to find answers to all questions, but just to the ones that make you change your mind.”

After all, if you have five answers that confirm the same thing, then you really only have one answer, he argues.

Rew accepts the chess analogy, in which a combination of mediocre people and mediocre machines can beat grandmasters, but he is not interested in mediocrity.

“Imagine putting a group of really good people with a group of really good machines; I think it has to be done,” he says.

But even in this ideal combination, progress is still likely to be incremental, he says.

“When you look at all the incremental changes that have occurred in our lives and you add them up, you can see that that has led to quite dramatic changes,” he notes.

“I suppose what I’m saying is that in the investment world we can look at these incremental changes, these 1 per cent improvements, and if you have a lot of these incremental changes, then can you imagine the improvement for the client?”

Rew is convinced that current technological advances, such as recent progress in machine learning, could radically change the industry, though not in the immediate future.

“Do I think there is potential for all of what we have been talking about to dramatically change the face of our industry in five to 10 years’ time? Yes, I really do,” he says.

“But in the meantime we can use these things to make meaningful changes to what we do, how we do it and the cost of what we are doing.

“Given how competitive the landscape is, if we can be marginally better than the best person out there, then clearly we are going to be the best at what we do.”

Simon Russell will be speaking in more detail about human and machine decision-making at the [i3] Luncheon series, to be held on 27 March in Melbourne and 28 June in Sydney.

Cyborg: How to Optimally Integrate Human and Machine Investment Decision-making

By Simon Russell, with contributions from Dr Alistair Rew

154 pp. Publicious Pty Ltd. 2017

__________

[i3] Insights is the official educational bulletin of the Investment Innovation Institute [i3]. It covers major trends and innovations in institutional investing, providing independent and thought-provoking content about pension funds, insurance companies and sovereign wealth funds across the globe.