Not all problems are computable, and there are good reasons to believe that many investment problems fall into this category, Professor Carsten Murawski of the Brain, Mind & Markets Lab says.

The idea that financial problems require a purely rational approach is a fallacy.

People use shortcuts all the time and there is good reason for this: the brain has a finite capacity to calculate problems.

‘But we can use computers!’ I hear you think. And of course you are right: we can. Yet even then there are limitations.

Computers run out of memory, too, when calculating certain complex problems. And many problems are far more complex than we think they are.

We are so used to the way we make decisions that we forget the brain doesn’t just draw on reason, but also on emotions, heuristics, personal preferences and all kinds of other shortcuts.

During a recent [i3] Luncheon, Professor Carsten Murawski of the University of Melbourne’s Brain, Mind & Markets Lab showed how quickly simple problems, when pursued purely logically, become computational monstrosities.

He illustrated his point with the following example.

If you have enough money to buy three pieces of fruit and you want to ascertain the best way of spending your money, then you could calculate how many different ways you can combine these pieces of fruit.

Then you must compare the different combinations to see which choice of fruit would give you the best bang for your buck.

We humans quickly discard a whole set of possibilities. We don’t count duplicate combinations; we find that combining an apple with a pear is the same as combining a pear with an apple, but computers don’t make that assumption unless you specifically tell them. Mathematically, they are two different solutions.

We also have preferences. You may not like bananas, or maybe you like most fruit but still prefer a pear over a banana. Or maybe you just ate a banana and so you want something different this time. Machines don’t think like this.

So let’s assume a purely mechanical approach to calculating the number of ways you can combine three pieces of fruit and then look at the number of comparisons you would have to do to cover all possibilities.

If you have three pieces of fruit and you want to know all the ways in which these can be combined, including using only one, two or no pieces of fruit, then mathematics tells us that the number of ways they can be combined is 2 to the power of 3, which is eight ways.
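The count of eight can be checked by simply listing the possibilities. The following is a minimal Python sketch, assuming a hypothetical basket of an apple, a pear and a banana; the names `fruits` and `all_subsets` are illustrative and not taken from the talk.

```python
from itertools import combinations

# Hypothetical basket used only for illustration.
fruits = ["apple", "pear", "banana"]

def all_subsets(items):
    """Return every subset of items, from the empty set to the full set."""
    return [
        subset
        for size in range(len(items) + 1)
        for subset in combinations(items, size)
    ]

subsets = all_subsets(fruits)
for subset in subsets:
    print(subset)

print(len(subsets))  # 8, i.e. 2 ** 3
```

Each extra item doubles the list, because every existing subset can either include the new item or leave it out.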

By the time we have calculated the number of possible combinations, you would probably already have made up your mind about which fruit you would prefer.

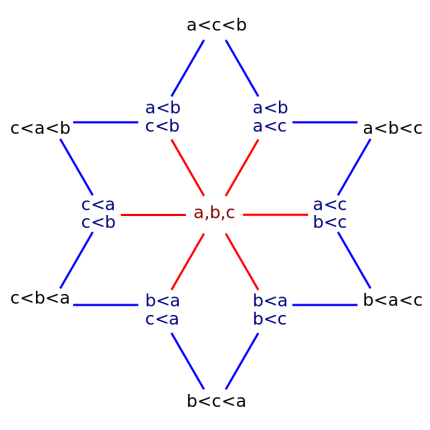

But for a machine to come up with the optimum combination, it would have to compare all the different combinations individually, and the number of comparisons it would need to make between these eight combinations to reach a fully informed choice is 13.

All 13 comparisons of three-item combinations. Source: Wikipedia

In enumerative combinatorics, these comparisons are calculated using Fubini numbers, which count the number of weak orderings on a set of elements. And they quickly become very large.

“If the number of items a supermarket stocked was 10, like a mini, mini Aldi, the number of different combinations of items that you could form, if you bought every item only once, is about 1000,” Murawski said.

“The number of sets that you would have to compare, in order to find the best one is about 102 million,” he said.
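Both figures in the quote can be reproduced with a few lines of Python. This is a sketch under one assumption: that "the number of sets you would have to compare" corresponds to the Fubini number of the item count, which is what the 13 and the roughly 102 million suggest. The function name `fubini` is illustrative, and the recurrence used is the standard one for counting weak orderings.

```python
from math import comb

def fubini(n):
    """Number of weak orderings (rankings that allow ties) of n items."""
    a = [1]  # a[0] = 1: there is exactly one way to rank nothing
    for m in range(1, n + 1):
        # Pick the k items tied for first place, then rank the other m - k.
        a.append(sum(comb(m, k) * a[m - k] for k in range(1, m + 1)))
    return a[n]

print(2 ** 10)     # 1024 possible baskets from 10 items
print(fubini(3))   # 13
print(fubini(10))  # 102247563
```

The number of baskets doubles with each extra item, but the Fubini numbers grow much faster still: going from 3 to 10 items takes the count from 13 to more than 100 million.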

Now, if you keep in mind that the average supermarket in Australia stocks about 40,000 items, then you quickly realise that the number of comparisons you’d have to make as an unemotive machine is vastly greater.

“It is more than the number of atoms in the universe,” Murawski said.
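A rough sense of the scale can be had without computing the full ranking count. The sketch below looks only at the number of distinct baskets (each item bought at most once), which is already a lower bound on the work involved. The figure of about 10^80 atoms is an assumption here: it is a commonly cited rough estimate for the observable universe, not a number given in the talk.

```python
from math import log10

ITEMS = 40_000       # supermarket range quoted above
ATOMS_EXPONENT = 80  # assumption: roughly 10 ** 80 atoms, a common rough estimate

# Number of distinct baskets is 2 ** ITEMS; express its size as a power of ten.
baskets_exponent = ITEMS * log10(2)

print(round(baskets_exponent))            # 12041, so about 10 ** 12041 baskets
print(2 ** ITEMS > 10 ** ATOMS_EXPONENT)  # True
```

Even this lower bound is a number with more than 12,000 digits, compared with 81 digits for the atom estimate.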

Alan Turing

It was Alan Turing – the famous mathematician who was able to decipher German encryption during World War II – who proved that some mathematical functions were uncomputable.

“In the 1930s, there were some big open questions in mathematics that people around the world were trying to solve. One of the biggest questions was whether mathematics was computable,” Murawski said.

“Is mathematics just a sequence of logical operations? In other words, could you program an algorithm for any mathematical proof out there?

“Very famously, Alan Turing showed that that was not the case. There are certain mathematical proofs out there for which it would take an infinite number of logical operations, and therefore time, to solve.

“Not everything that can be written down mathematically, for example the solution to an optimisation problem, can also be computed,” he said.

In finance and economics, many problems don’t have a purely logical solution either, Murawski said. Not all finance problems are computable.

“If we look at the types of problems that economists are interested in, such as optimisation of utility or what markets do, a lot of these problems are not just hard, they are not computable in the Turing sense. It might take an infinite number of calculations to solve them.”

This is also true for certain financial products, Murawski said.

“There is a paper by a colleague at Princeton, Markus Brunnermeier, that showed that a lot of CDOs that were sold in the lead-up to the financial crisis were not computable. They were so complicated that if you wanted to solve them in the perfect way that mathematical finance dictated, you couldn’t do it,” he said.

Coping Mechanisms of the Brain

In finance, we are aware that people have biases that influence their economic choices. Often we tend to think of these as ‘irrational’, stemming from the idea that, over the course of human evolution, people have had to make monetary decisions for only a relatively short time and so our brains are simply not equipped to deal with them well.

“Behavioural economics has been wildly popular as a field and one of the main contributions has been the documentation of cognitive biases, a systematic deviation of people’s behaviour from rational choice,” Murawski said.

“Behavioural economists today have identified about 150 of them.

“The way we often think about these cognitive biases is as misbehaviour. They have a negative connotation, because people are supposed to be rational and when they aren’t we call them irrational.

“It is almost a ‘Taxonomy of Misbehaviour’, because that is how economists think about that,” he said.

But Murawski said there is a flaw to this kind of thinking.

“If almost every behaviour that we observe is a deviation from our theory, that doesn’t mean there is something wrong with people’s behaviour. What it really means is that there is something seriously wrong with the theory.

“We are convinced that a lot of these cognitive biases are consequences of information constraints or complexities.

“The problems we face differ in the level of complexity. We can measure complexity and we know that there are boundaries beyond which problems become really hard or even impossible for people to solve,” he said.

In other words, there are good reasons to believe that cognitive biases are a way for the brain to deal with complexity.

But perhaps it is equally true that many investment problems can be defined but simply cannot be solved, as they are not computable.

Professor Carsten Murawski spoke at the [i3] Luncheon, co-hosted by Perpetual, in September 2019 about problems that you can define but which are not computable.

__________

[i3] Insights is the official educational bulletin of the Investment Innovation Institute [i3]. It covers major trends and innovations in institutional investing, providing independent and thought-provoking content about pension funds, insurance companies and sovereign wealth funds across the globe.